Deep Learning for Jazz Walking Bass Transcription

This is the accompanying website for the paper:

- Jakob Abeßer, Stefan Balke, Klaus Frieler, Martin Pfleiderer, and Meinard Müller

Deep Learning for Jazz Walking Bass Transcription

In Proceedings of the AES Conference on Semantic Audio, 2017.

@inproceedings{AbesserBFPM17_JazzBassDeep_AES,
  author     = {Jakob Abe{\ss}er and Stefan Balke and Klaus Frieler and Martin Pfleiderer and Meinard M{\"u}ller},
  title      = {Deep Learning for Jazz Walking Bass Transcription},
  booktitle  = {Proceedings of the {AES} Conference on Semantic Audio},
  address    = {Erlangen, Germany},
  year       = {2017},
  url-demo   = {https://www.audiolabs-erlangen.de/resources/MIR/2017-AES-WalkingBassTranscription},
  url-details = {http://www.aes.org/e-lib/browse.cfm?elib=18762},
}

Abstract

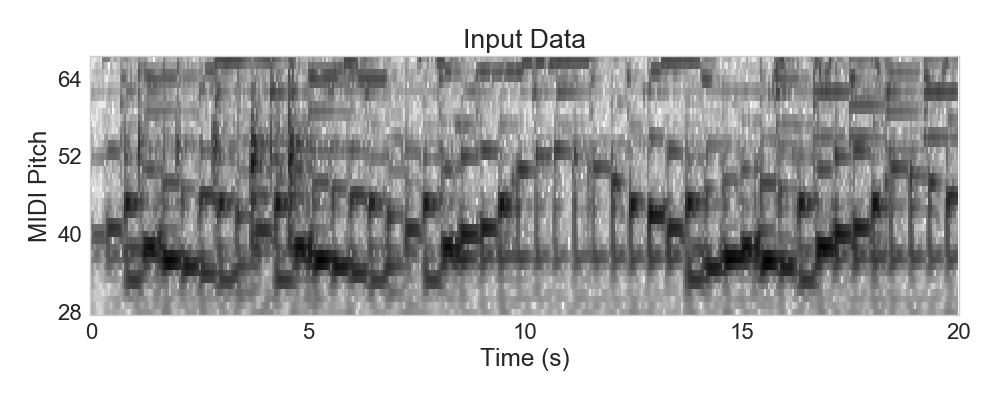

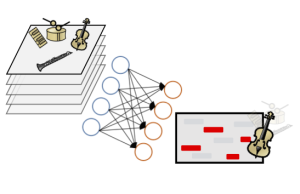

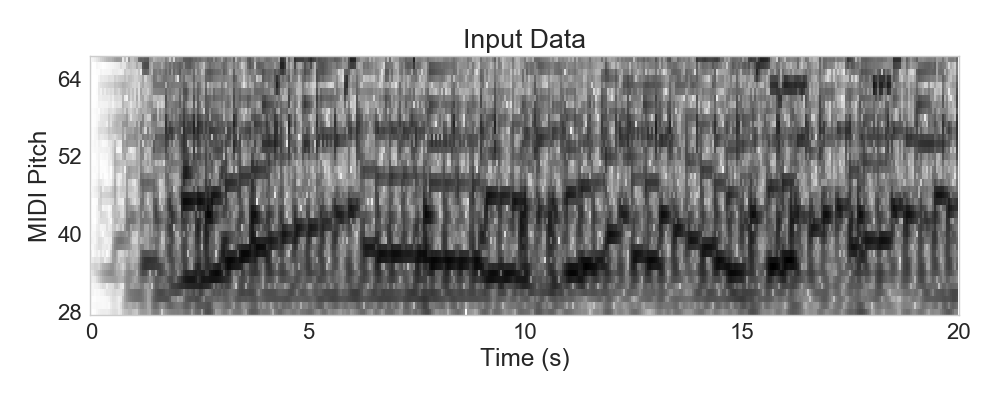

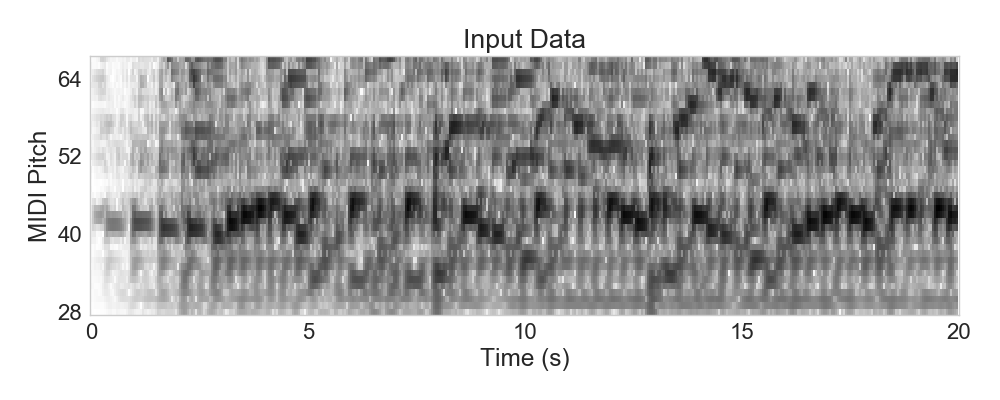

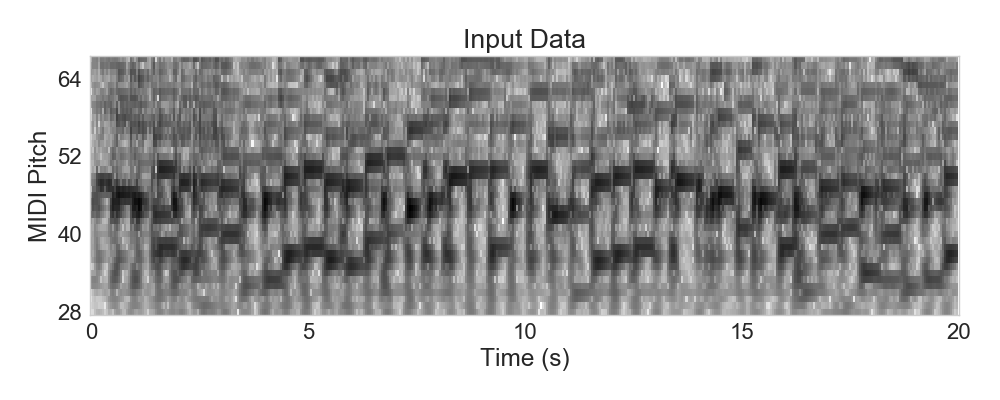

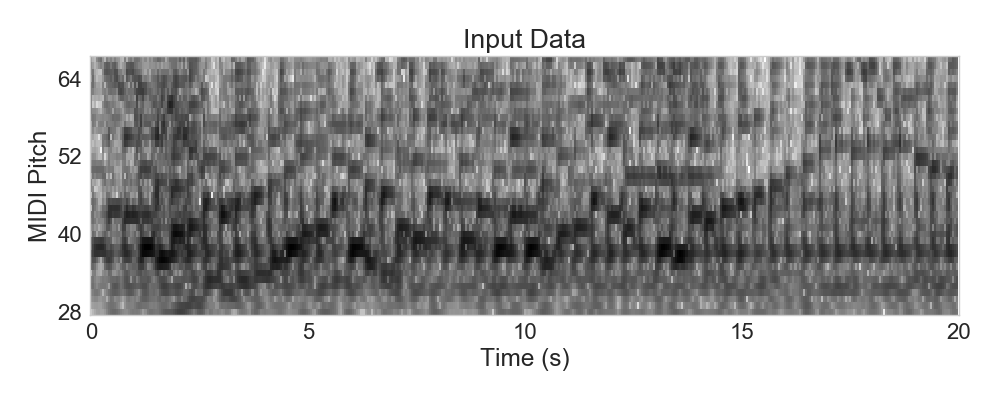

In this paper, we focus on transcribing walking bass lines, which provide clues to the chords actually played in jazz recordings. Our transcription method is based on a deep neural network (DNN) that learns a mapping from a mixture spectrogram to a salience representation that emphasizes the bass line. Furthermore, using beat positions, we apply a late-fusion approach to obtain beat-wise pitch estimates of the bass line. First, our results show that this DNN-based transcription approach outperforms state-of-the-art transcription methods on this task. Second, we found that augmenting the training set with pitch shifting improves model performance. Finally, we present a semi-supervised learning approach in which additional training data is generated from predictions on unlabeled datasets.
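The beat-wise late fusion mentioned in the abstract can be illustrated with a minimal sketch. It assumes that the DNN output is a matrix of frame-wise salience values (frames × pitches), that beat times are given in seconds, and that the fusion averages the salience over all frames inside each beat before picking the strongest pitch. The function name `beatwise_pitch`, the `midi_min` offset for the first salience column, and the averaging rule are hypothetical choices for illustration, not necessarily the exact procedure used in the paper.

```python
import numpy as np


def beatwise_pitch(salience, frame_times, beat_times, midi_min):
    """Fuse frame-wise bass salience into one pitch estimate per beat.

    salience:    array of shape (num_frames, num_pitches), one row per frame.
    frame_times: array of shape (num_frames,), frame time stamps in seconds.
    beat_times:  sorted array of shape (num_beats,), beat positions in seconds.
    midi_min:    MIDI pitch of salience column 0 (hypothetical parameter).

    Returns an int array of shape (num_beats - 1,) with one MIDI pitch per
    inter-beat interval, or -1 where no frame falls inside the interval.
    """
    pitches = np.full(len(beat_times) - 1, -1, dtype=int)
    for i, (start, end) in enumerate(zip(beat_times[:-1], beat_times[1:])):
        # Select all frames that lie in the half-open interval [start, end).
        in_beat = (frame_times >= start) & (frame_times < end)
        if not in_beat.any():
            continue
        # Assumed late-fusion rule: average salience over the beat,
        # then take the pitch bin with the highest mean salience.
        beat_salience = salience[in_beat].mean(axis=0)
        pitches[i] = midi_min + int(np.argmax(beat_salience))
    return pitches
```

Averaging before the argmax lets every frame of a beat contribute evidence, which makes the estimate less sensitive to a single noisy frame than a per-frame decision; whether the paper uses the mean, a sum, or another aggregation should be checked against the paper itself.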

DNN Architecture

We used Keras to train our DNNs. All details of the architecture can be found in the file model.json. The training procedure is described in the paper. If you have any questions, please do not hesitate to ask!
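To get a quick overview of the layers stored in model.json without installing Keras, the file can be read as plain JSON. The sketch below assumes the usual Keras serialization layout, in which each layer entry has a `class_name` and a `config` dictionary. Depending on the Keras version, a Sequential model stores its layers either directly as a list under `config` (older Keras 2 releases) or under `config["layers"]` (newer releases), so both cases are handled. The function name `list_layers` is hypothetical, and the actual contents of model.json are not reproduced here.

```python
import json


def list_layers(model_json_text):
    """Return (class_name, units) for each layer in a Keras model JSON string.

    `units` is None for layers without a "units" entry (e.g. Dropout).
    Assumption: Keras 2 naming; Keras 1 files used different config keys.
    """
    spec = json.loads(model_json_text)
    config = spec["config"]
    # Older Keras 2 Sequential models: config is the layer list itself.
    # Newer versions: config is a dict holding the list under "layers".
    layers = config if isinstance(config, list) else config.get("layers", [])
    return [
        (layer["class_name"], layer.get("config", {}).get("units"))
        for layer in layers
    ]


# Usage (hypothetical path):
# with open("model.json") as f:
#     for name, units in list_layers(f.read()):
#         print(name, units)
```

For instance, a made-up JSON string describing a Dense layer with 512 units followed by a Dropout layer yields `[("Dense", 512), ("Dropout", None)]`; these values are illustrative only. To rebuild the network itself, Keras provides `keras.models.model_from_json`, although a file written by a 2017 Keras release may not load unchanged in current versions.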

Code for making predictions is available on GitHub.

Example: Art Pepper - Blues for Blanche

Example: Ben Webster - Night and Day

Example: Bob Berg - I Didn't Know What Time It Was

Example: Chet Baker - Let's Get Lost

Example: Clifford Brown - Joy Spring

Example: John Coltrane - So What