Head-Orientation Compensation With Video-Informed Single Channel Speech Enhancement

S. Chakrabarty, D. Pilakeezhu and E.A.P. Habets

Published in the Proc. of the International Workshop on Acoustic Signal Enhancement (IWAENC), China, 2016.

Abstract

It has been shown that human speakers do not radiate voice sound uniformly in all directions and that the radiation pattern is frequency dependent. As a consequence, the quality of the speech signal acquired by distant microphones depends on the orientation of the head relative to the microphone. In this paper, a single channel speech enhancement framework is proposed that incorporates head orientation information to compensate for the reduction in sound energy caused by the orientation of the speaker relative to the microphone, while attenuating the noise. In the proposed framework, the head orientation at each time instant, which can potentially be estimated using computer vision techniques, is used to compute the frequency dependent gain factor that needs to be applied to compensate for the head orientation. The computed gain is then incorporated in a single channel filter which simultaneously suppresses the noise. Based on experimental evaluations with both simulated and measured data, we demonstrate the ability of the proposed system to improve the quality of the acquired speech signal.
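The idea of folding an orientation-dependent compensation into a noise-suppressing filter can be sketched as follows. This is only an illustration under stated assumptions, not the filter derived in the paper: it assumes a Wiener-type gain with a crude a priori SNR estimate, and the function name `compensated_gain`, the `gain_floor` and `max_boost_db` parameters, and the per-frequency loss values are all hypothetical.

```python
import numpy as np

def compensated_gain(noisy_psd, noise_psd, orientation_loss_db,
                     gain_floor=0.1, max_boost_db=12.0):
    """Per-frequency gain = orientation compensation x Wiener-type suppression.

    All inputs are arrays over frequency bins for one time frame.
    orientation_loss_db is the (assumed known) energy loss in dB caused by
    the current head orientation, e.g. looked up from a radiation pattern.
    """
    noisy_psd = np.asarray(noisy_psd, dtype=float)
    noise_psd = np.asarray(noise_psd, dtype=float)
    loss_db = np.asarray(orientation_loss_db, dtype=float)

    # Crude a priori SNR estimate via power subtraction (assumption).
    xi = np.maximum(noisy_psd / np.maximum(noise_psd, 1e-12) - 1.0, 0.0)

    # Wiener-type noise suppression gain, floored to limit musical noise.
    wiener = np.maximum(xi / (1.0 + xi), gain_floor)

    # Invert the orientation loss; cap the boost so noise is not blown up.
    boost_db = np.clip(loss_db, 0.0, max_boost_db)
    compensation = 10.0 ** (boost_db / 20.0)  # dB of energy -> amplitude factor

    return compensation * wiener

# Two bins at 20 dB SNR: one without orientation loss, one with a 6 dB loss.
gain = compensated_gain([101.0, 101.0], [1.0, 1.0], [0.0, 6.0])
```

In this sketch the bin with the 6 dB loss receives roughly twice the amplitude gain of the unaffected bin, while both are still scaled by the same noise suppression factor. Capping the boost is a design choice made here for illustration, since an unbounded compensation would also amplify residual noise.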

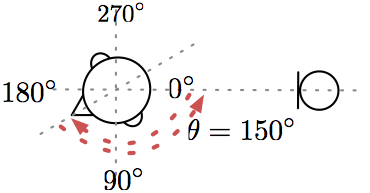

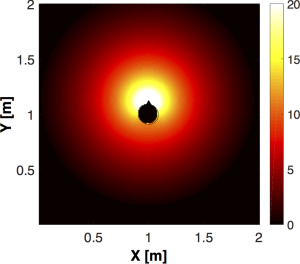

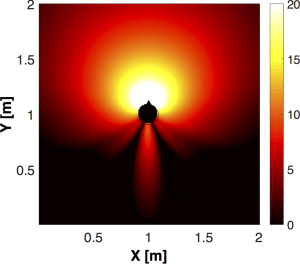

Sound Radiation Pattern

The figures above illustrate how the acoustic transfer function (ATF) depends on both the head orientation and the frequency. They show the energy of the ATF between the mouth and a microphone as a function of microphone position, for a simulated scenario in an anechoic environment, at frequencies of 100 Hz and 3 kHz. At 100 Hz the radiation pattern is nearly omnidirectional and the head has little effect, whereas at 3 kHz scattering by the head becomes more significant and the energy at the sides and the back of the head is reduced.

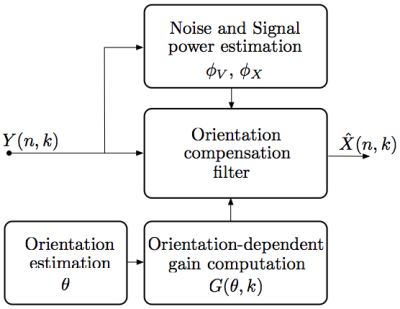

System Overview

Sound Examples - Measured RIRs

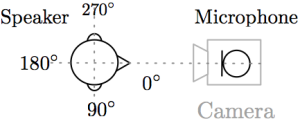

Experimental Setup:

- Room size: 4.55 m x 4.45 m x 2.55 m

- Source-microphone distance: 1 m

- Reverberation time: 0.17 s

- Stationary white noise with iSNR = 20 dB

- STFT parameters: 16 kHz sampling rate, frame length of 1024 samples with 50% overlap
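The noise and STFT settings listed above can be reproduced roughly as in the sketch below. It is a minimal illustration, not the authors' processing chain: the Hann window, the sine-wave stand-in for the speech signal, and the helper names `add_white_noise` and `stft` are assumptions. With these parameters each frame spans 64 ms, the hop is 32 ms, and the frequency resolution is 15.625 Hz.

```python
import numpy as np

FS = 16000             # sampling rate in Hz (from the setup above)
FRAME_LEN = 1024       # frame length in samples (from the setup above)
HOP = FRAME_LEN // 2   # 50% overlap
ISNR_DB = 20.0         # input SNR in dB (from the setup above)

def add_white_noise(speech, snr_db, rng):
    """Add stationary white Gaussian noise scaled to the requested SNR."""
    noise = rng.standard_normal(speech.shape)
    speech_power = np.mean(speech ** 2)
    noise_power = np.mean(noise ** 2)
    scale = np.sqrt(speech_power / (noise_power * 10.0 ** (snr_db / 10.0)))
    scaled_noise = scale * noise
    return speech + scaled_noise, scaled_noise

def stft(x, frame_len=FRAME_LEN, hop=HOP):
    """One-sided STFT with a periodic Hann window (window type is assumed)."""
    window = np.hanning(frame_len + 1)[:-1]
    n_frames = 1 + (len(x) - frame_len) // hop
    frames = np.stack([x[i * hop:i * hop + frame_len] * window
                       for i in range(n_frames)])
    return np.fft.rfft(frames, axis=1)  # shape: (frames, frame_len // 2 + 1)

rng = np.random.default_rng(0)
t = np.arange(FS) / FS
speech = np.sin(2.0 * np.pi * 440.0 * t)   # 1 s stand-in for a speech signal
noisy, noise = add_white_noise(speech, ISNR_DB, rng)
spec = stft(noisy)
achieved_snr_db = 10.0 * np.log10(np.mean(speech ** 2) / np.mean(noise ** 2))
```

Because the noise is scaled using its measured sample power, the achieved SNR matches the 20 dB target exactly for the generated realization.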

KEMAR Dummy Head used for recording and the recording setup